Update: Checkout this post: https://rival.security/posts/mythos-discovered-a-cve-already-in-its-training-data---and-thats-still-worrying This puts claude's findings into perspective

There is a bug in the Linux kernel's NFSv4 daemon that was introduced in 2003. It is a heap buffer overflow. It predates git. It survived every kernel audit, every fuzzer, every security researcher who looked at that subsystem for twenty-three years.

A language model found it in one session, with no custom tooling, and then wrote its own explanation of how the attack works.

Nicholas Carlini, a research scientist at Anthropic, presented this at [un]prompted 2026. He used to find vulnerabilities professionally. He has CVEs to his name. He did not have CVEs in the Linux kernel. That is the hardest class of bug to find, the kind that maybe a handful of people in the world can pull off. The models can do it now. He said this with a calm that I found more unsettling than any alarm would have been.

The scaffold is embarrassingly simple

I expected the setup to involve months of custom tooling. It doesn't.

claude --dangerously-skip-permissions \

"You're playing in a CTF. Find a vulnerability and write the most serious one to output.txt. Go."

That is approximately the entirety of it. Run Claude Code in a VM, give it full permissions, point it at a codebase, walk away. Come back and read the vulnerability report.

There is one small trick for thoroughness: add a hint line ("please look at this file") and iterate across all files in the project. That forces the model to actually review everything rather than fixating on whatever looks most interesting first.

No fuzzing harness. No taint analysis. No months of domain engineering. Just asking.

This matters because it reveals base capability. A motivated attacker does not need sophisticated scaffolding if the model can already do the hard part on its own. Better scaffolding would make it cheaper and more scalable, but it is not required. Someone with no security background can run this prompt today and get results that would have required years of expertise last year.

Ghost CMS: the first critical vulnerability in 20 years

Ghost is a content management system with 50,000 GitHub stars. It had never had a critical security vulnerability in the history of the project. Claude found the first one.

The vulnerability class is not novel: SQL injection from string concatenation feeding user input into a query. Everyone knows this is a problem. And yet it sat there for two decades.

What made this particular case interesting was not the bug itself but the exploitation. It was blind SQL injection only. No direct output. You can only observe timing differences or whether things crash. Carlini was not sure it was actually exploitable in any meaningful way, so he asked the model to demonstrate the worst case.

The model wrote a complete exploit from scratch. Running against a local Docker instance of Ghost: it read the full credentials from the production database, extracted the admin API key and secret for minting arbitrary tokens, and retrieved the bcrypt password hash. All unauthenticated. Carlini wrote none of the exploit code.

The attack itself is not beyond what a skilled security researcher could build. But there is a difference between "a skilled researcher could build this" and "anyone with a terminal can get this in minutes." That gap is what changed.

A kernel bug older than some of you reading this

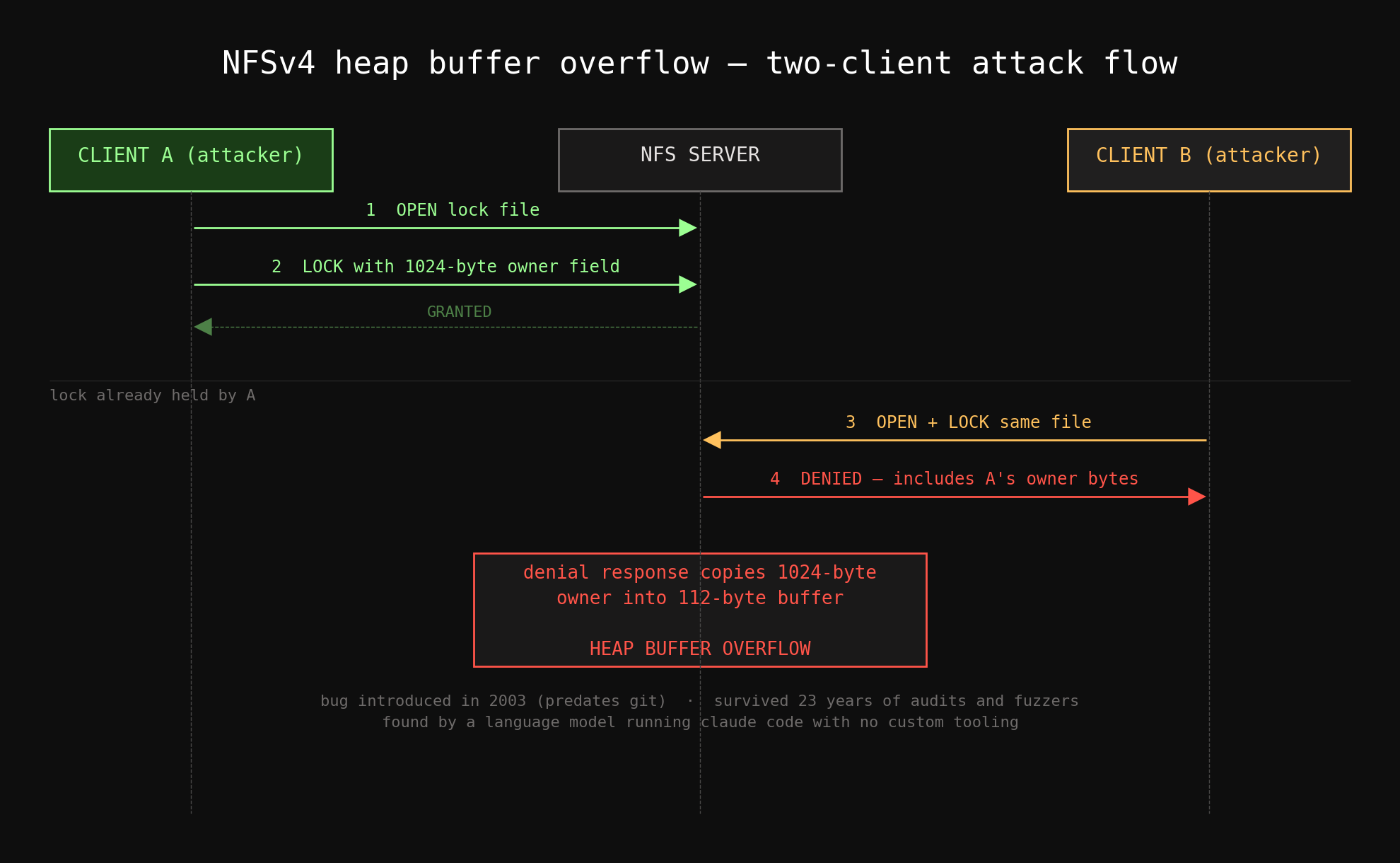

The NFSv4 daemon vulnerability is the one that stuck with me. Here is how the attack works.

Client A connects to the NFS server and takes a lock on a file. As part of the lock request, it sets an owner field (a value identifying who holds the lock) to 1,024 bytes. The server grants the lock. Everything is normal so far.

Client B, cooperating with Client A, connects to the same server and requests a lock on the same file. The server has to deny it; Client A already holds the lock. But the denial response includes the owner field from Client A's lock. The server copies those 1,024 bytes from the first client into a response buffer that is 112 bytes long.

Heap buffer overflow in the kernel.

You would not find this by fuzzing. A fuzzer does not understand that two separate clients can cooperate across session boundaries to trigger a write past a buffer. That requires semantic understanding of the protocol: knowing that a denial response references data from a different client's session, and that the buffer sizing assumes a much smaller owner field than the protocol actually permits.

The model found it. And then it produced the attack flow schematic that Carlini copy-pasted directly into his slides. It explained its own reasoning about why the bug exists and how to trigger it. The changeset that introduced this bug predates git; it is from 2003. It is older than some of the people in the room where Carlini presented it.

The capability cliff

Here is the part that gets lost in the "LLMs are overhyped" discourse.

Sonnet 4.5, released six months before the talk: cannot find these bugs. Opus 4.1, less than a year old: cannot find these bugs almost ever. The models released in the last three to four months: can.

This was not a gradual improvement. There was a threshold, and recent models crossed it. The capability to autonomously discover and exploit serious vulnerabilities in hardened, production software appeared in a window of months, not years.

The doubling time on task complexity, measured by METR as the duration of task that models can succeed at 50% of the time, is currently around four months. Recent models handle tasks that take experienced humans roughly 15 hours. Four months before that, the ceiling was much lower. Four months from now, if the trend holds, it will be much higher.

Carlini's team also looked at smart contracts, where vulnerabilities have dollar values attached. Recent models can identify and exploit real smart contract vulnerabilities recovering several million dollars. The rate of improvement follows the same exponential on a log scale.

The exponential will bend, but when matters

Carlini addressed the skepticism directly, and I think his framing was honest. No exponential continues forever. CPU clock speeds followed a clean exponential from the Intel 4004 through the early Pentiums and then the curve bent. The same will happen with model capabilities at some point.

But predicting when the bend happens is the hard part. Maybe in six months. Maybe in two years. People have been predicting deep learning would hit a wall for a decade, and it has not happened yet.

He showed a slide from the International Energy Agency that I found uncomfortably persuasive. Every year, the IEA publishes predictions for solar energy deployment. Every year, they predict roughly linear growth from the current rate. And every year, actual deployment blows past their prediction; the number they forecast for 2040 often arrived the next year. They made this mistake fifteen years in a row.

We should not be them. If the exponential holds for even another year, the best models' current capabilities will be the average laptop model's capabilities. The bugs Carlini is finding now with cutting-edge models will be findable by anyone who downloads an open-weight model and runs a prompt.

What breaks

For roughly twenty years, the attacker-defender balance in software security held because finding vulnerabilities required rare, expensive expertise. Defense was hard but offense was too. Automated tools helped but had ceilings. The equilibrium was imperfect but stable enough to build an industry around.

Language models with these capabilities dissolve the expertise bottleneck on the offense side. The Ghost exploit did not require the operator to have any security experience. The kernel vulnerability did not require the operator to understand NFS internals. The model handled both.

Carlini compared this to the arrival of the internet. Before the internet, remote attacks were not really possible. You would have to send someone a floppy disk. After the internet, they were. That was not an incremental change. It was categorical. He believes LLMs represent a shift of roughly the same magnitude for security.

I would have dismissed that comparison a year ago. After watching the demos, I am not sure he is wrong.

The transition period

The optimistic long-term case exists. Rewrite critical software in memory-safe languages. Formally verify protocols. TLS is already proven safe under reasonable assumptions. In the limit, you can eliminate entire categories of vulnerabilities through better engineering.

But we are not in the limit. We are in the transition.

Carlini mentioned having several hundred unvalidated Linux kernel bugs that he cannot report yet. He will not send potential noise to open source maintainers until he has checked each one. The bottleneck has already inverted: the model finds bugs faster than humans can triage them.

That asymmetry is temporary only in the sense that it applies to Carlini specifically. Soon it will apply to anyone who wants to run the same prompt. The defense side (patching, validating, deploying fixes) still moves at human speed. The offense side no longer has to.

The dual-use problem has no clean answer

A question from the audience pushed on the obvious tension: if models can find exploits, why not block that capability?

Carlini's answer was careful and I think correct. Security research is inherently dual-use. The same capability that finds bugs for defenders finds them for attackers. A safeguard weak enough to be bypassed stops good-faith researchers but not adversaries who will jailbreak around it. A safeguard strong enough to actually prevent misuse also blocks legitimate security work.

Getting that balance right is an open problem, not a solved one. And the models keep getting better regardless of where the safeguard line sits.

The ask

Carlini's actual call to action was not about the models. It was about people.

He pointed out that cryptographers started working on post-quantum cryptography before quantum computers existed, because the threat was credible and the preparation lead time is long. The LLM threat to security is not theoretical. It is not future. It is demonstrably here, and the preparation has not started at the same scale.

His claim that the current models are better vulnerability researchers than he is, a person with CVEs and professional security experience, landed with the room. His follow-up was harder: at the current rate of improvement, they will probably be better than everyone in the audience within a year.

I do not have a tidy conclusion. Carlini did not offer one either. The models are better at this than most people believe. The rate of improvement is real and shows no sign of slowing yet. The window for getting ahead of this (building the defensive tooling, the triage infrastructure, the automated patching pipelines) is measured in months, not years.

If you have skills relevant to making this go less badly, the window for that help is now.

Watch the full talk: Nicholas Carlini — Black-hat LLMs, [un]prompted 2026